Almaz Ohene reports on how real-life structures of oppression are being replicated online through automated moderation and censorship

The weekend of Saturday 6 June 2020 saw the first wave of Black Lives Matter protests in the UK, which has forced the country to face up to the ubiquitous and pervasive structural oppression that Black, Indigenous and People of Colour (BIPOC) face worldwide.

That weekend also saw a spate of Facebook adverts run from the official Conservative Party page about a supposed Immigration Bill survey.

The two events are related, but we’ll come to that later.

Within a few hours of the appearance of the adverts, left-wingers began to counter by re-posting the link to their own social channels along with a copy and paste template. One such accompanying message read: “Tories carrying out a survey into their immigration proposals but apparently only sharing it on their algorithms, so their supporters’ views are overrepresented.”

And another: “If you disagree with the Tory Immigration Bill then PLEASE fill in this survey. No surprise but this is only showing up to party supporters/algorithms to those who align, trying to gauge support.”

Despite left-wingers’ sincere attempts to unskew the survey results, there was in fact no need to interact with the advert at all as there was no official Government consultation.

Chloe Green, Labour’s social media manager from 2016 to 2019, saw the frantic rates at which the counter-message was being shared online and waded in to stamp out the proliferation of misinformation.

“We don’t have a great public understanding of political process or political comms in our country,” she says. “So, something like this, which to a discerning eye, is so evidently not a Government official consultation, was thought to be one by potentially tens of thousands of people. It also concerns me that people didn’t necessarily utilise critical reasoning when they looked at this. We [the left] are so desperate to be active participants in anti-racist work online that we are taking things that are not genuine activists’ efforts and trying to spin them, so they feel like they’re useful.”

Algorithmic Punishments

Marketing algorithms are the backbone of building lobbying database lists. They produce probability scores which automatically assess how likely an internet user is to click on an advert. Algorithmic models are also deployed on a huge scale to automatically moderate the content posted to social media platforms such as Facebook, Instagram, YouTube and TikTok.

Over the past few years, conservative values have swept across the online algorithmic landscape. One example of this is the global fallout from the US SESTA/FOSTA Bill in 2018, which has seen social media sites blanket-ban certain kinds of content.

Facebook’s algorithmic moderation of its community guidelines, for example, has now been programmed to spot emoji strings – such as the eggplant or peach emoji – which are commonly used to imply sex acts or sexuality. YouTube has a similar policy of deprioritising content which contains LGBTQ vocabulary. The corporation faces consistent criticism for its demonetisation policies, which frequently target marginalised communities. Essentially, this means that professional queer education activists lose out on income.

Research by Salty, an online newsletter and platform for women, trans and non-binary people, in 2019 reported that plus-sized profiles were often flagged on Instagram for “excessive nudity” and “sexual solicitation”, and concluded that “risqué content featuring thin, cis white women seems less censored than content featuring plus-sized, black, queer women”.

In March, it was revealed that the makers of TikTok instructed moderators to suppress posts created by users deemed too “ugly”, “poor”, or “disabled” for the platform. Two documents obtained by The Intercept show how the algorithms are programmed to effectively punish particular demographics of TikTok users by artificially narrowing their audiences.

The documents – which appear to have been originally drafted in Chinese and later imperfectly translated into English for use in TikTok’s global offices – lay out bans for ideologically undesirable content in live streams and describe algorithmic punishments for unattractive and impoverished users.

Meanwhile, here in the UK, civil servants have been redrafting the guidelines of Ofcom – the communications regulator – to offer greater protection against online harms. The new regulatory model, published in April 2019, states: “The approach proposed in this White Paper is the first attempt globally to tackle this range of online harms in a coherent, single regulatory framework.”

However, a source within the Civil Service, who contributed to the definitions and recommendations for the online harms remit, responded: “As far as I can tell, the idea will be to start requiring platforms to enforce their own terms of service and also to set some new model terms. I haven’t seen anything about trying to make the existing terms less discriminatory.”

Policing Bodies and Online Harms

This is damning. The reality of inherent algorithmic bias means that the implementation of community guideline policies, which are meant to protect users from discrimination, is harming the very groups that need protection.

The report by Salty also found that people who come under attack for identifying as a member of the LGBTQ+ community, for example, have had their own accounts reported or banned, rather than those of their attackers.

Swedish erotic film director, screenwriter and producer Erika Lust has an Instagram following of 355,000. The company’s Instagram channel is careful to adhere to Instagram’s community guidelines. However, the brand has found that Instagram flags technically compliant content on its channel far more often than it flags accounts such as Dan Bilzerian’s, which feature sexually suggestive content in which Caucasian women who appeal to the heteronormative ideal are the focal point.

“Algorithmic bias happens to affect even more marginalised communities such as queer people, plus-sized bodies, and BIPOC bodies,” Lust says. “All female bodies are generally hyper-sexualised on the media. However, research shows that BIPOC women’s bodies are policed even more than their white, straight, cis counterparts for being ‘sexually suggestive’.

“This clearly implies that these bodies are considered inherently sexual, whether they are actually engaging in sex acts or not, which reinforces misogyny and racism both online and offline. Our society already strictly scrutinises marginalised people’s bodies, that’s why we need to reverse this trend on these platforms. Not only because they are supposed to be an equal and free space for everyone, but especially because we are all witnessing how social media debates have a huge impact on how we look at social change.”

Within the tech industry, it is homogeneity which enforces bias and its root cause is an in-built demographic hierarchy.

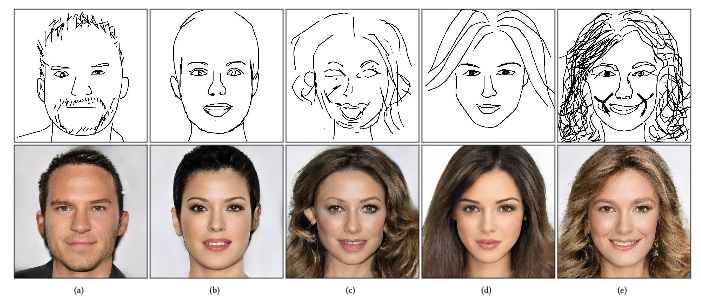

Further proof of this came in June 2020, when a series of Twitter threads by machine learning scholar Robert Osazuwa Ness showed how current pixel scanning machine learning models default to creating Caucasian faces, even when the source material features people of colour.

Commentators on the thread went on to explain that the underlying problem with the model was that the large-scale image data-set of 30,000 faces used – called ‘CelebAMask-HQ’ – is in itself “extremely biased to good-looking white people”.

Although the mainstream now seems to be aware of how right-wing marketing ploys harness existing online algorithms to play on the fears of middle England, it has been slow to make the connection between the oppressive structures that disempower and endanger BIPOC in real life and the way those structures are being replicated in online spaces.

Only by addressing the fact that online spaces suppress the lived experiences of marginalised communities via inherent algorithmic bias can we collectively begin to build online communities that are truly non-discriminatory, inclusive and safe for all.

Facebook, Instagram, TikTok and YouTube were contacted for comment, but did not respond.